In the previous essay, we focused on the architecture of the Open edX system. In this essay we take a look at the quality and technical debt of the Open edX source code. We provide examples of the coding guidelines and standards used by the developers working on the edX project and relate them to the actual code.

We also look at the overall process of submitting new code to the Open edX platform and the stages that developers have to go through before their code is accepted and runs in the production environment.

Overview of the Quality Process

The Open edX platform takes several measures to ensure that contributions to the software are held to a high standard. An entire developer guide is dedicated to guiding contributors and setting out the quality standards for contributions 1.

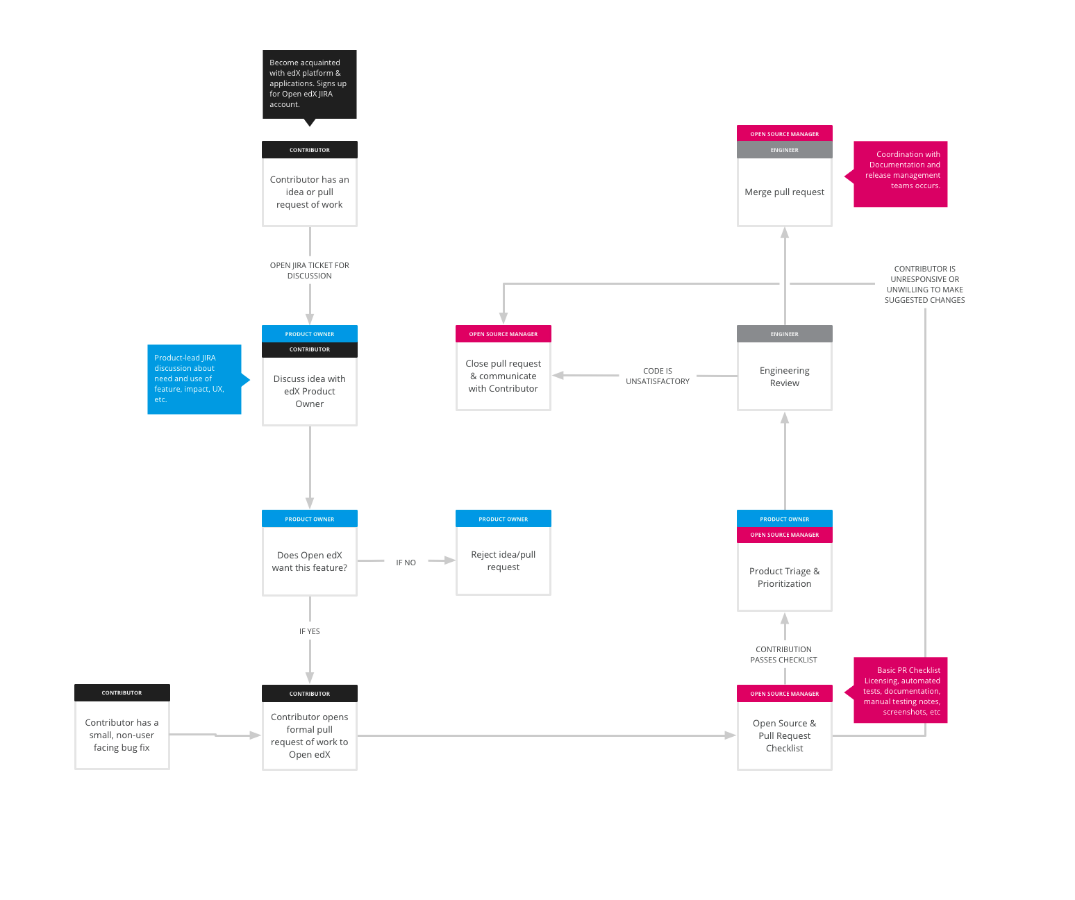

The contribution process is designed to keep the quality of contributions high and follows a general scheme:

A contributor should preferably contact Open edX as early as possible in the design cycle of a feature, both to get guidance and to find out whether the feature is already being worked on. Early contact and approval will likely improve the chances that the pull request is accepted and shorten the time it takes to review.

The accompanying figure shows the intermediate steps in the pull request acceptance process.

There are a number of roles in the code acceptance process:

- Core committers: Individuals responsible for accepting a pull request and upholding the quality standards.

- Product Owners: Prioritize the work of the core committers, depending on the features needed or requested.

- Community managers: Ensure a healthy development and communication environment.

- Contributors: Individual developers wanting to add or improve a feature.

The test processes

Once a pull request is made, numerous automated tests are run. The Open edX project takes testing very seriously: a dedicated test engineering team maintains the testing infrastructure. When a new feature is created, two kinds of tests need to be written: general tests that evaluate the feature on the Open edX platform, and tests specific to the new feature. General tests include Django tests as well as acceptance tests, which verify behavior that relies on external systems.

Open edX has a Jenkins installation specifically for testing pull requests. Before a pull request can be merged, Jenkins must run all the tests for that pull request: this is known as a “build”. If even one test in the build fails, the entire build is considered a failure. Pull requests cannot be merged until they have a passing build.

Code coverage is measured with coverage.py for Python and JSCover for JavaScript. The goal is to steadily improve coverage over time, so a tool called diff-cover was written that reports which lines in a branch are not covered by tests. With this tool, pull requests reach a very high percentage of test coverage and, ideally, the test coverage of existing code increases over time.

If the code passes the automated tests, it is reviewed based on priority. If the coding standards and functionality are up to par, it is accepted by a core committer and added to the Open edX platform. If it is rejected for any reason, the contributor is contacted and the reasons are discussed.
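The two gates described above, an all-or-nothing build and coverage measured on changed lines only, can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions, not the actual Jenkins configuration or the implementation of diff-cover; the function names `build_passes` and `diff_coverage` and their inputs are hypothetical.

```python
def build_passes(test_results):
    """A build passes only if every single test in it passed.

    `test_results` maps a (hypothetical) test name to True/False.
    """
    return all(test_results.values())


def diff_coverage(changed_lines, covered_lines):
    """Return (percent of changed lines covered, sorted uncovered changed lines).

    `changed_lines` are the line numbers touched by a branch,
    `covered_lines` are the line numbers executed by the test suite.
    """
    changed = set(changed_lines)
    if not changed:
        # Nothing changed, so there is nothing left uncovered.
        return 100.0, []
    uncovered = sorted(changed - set(covered_lines))
    percent = 100.0 * (len(changed) - len(uncovered)) / len(changed)
    return percent, uncovered
```

For example, a branch that changes lines 10 to 13 while the tests execute only lines 10, 11 and 13 has 75% diff coverage, with line 12 reported as uncovered, even if the rest of the file is poorly tested.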

Coding hotspots and upcoming features

Three components have been at the center of attention over the last few months:

- the LMS (Learning Management System) module

- the CMS (Content Management System) module

- the Common module

These are the three main hotspots, with the highest frequency of code contributions. Additionally, the requirements directory and the scripts are updated to support the development that occurs on the main components listed above.
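One way to measure such hotspots is to count how often files under each top-level directory are touched by recent commits. The sketch below assumes a local clone of the repository and the `git` command line tool; the helper names `count_hotspots` and `changed_paths` are our own, and this is not necessarily how the figures in this essay were produced.

```python
import subprocess
from collections import Counter


def count_hotspots(paths):
    """Count changed files per top-level directory (or top-level file)."""
    counts = Counter()
    for path in paths:
        path = path.strip()
        if path:
            # "lms/djangoapps/foo.py" is attributed to "lms".
            counts[path.split("/", 1)[0]] += 1
    return counts


def changed_paths(repo_dir, since="3 months ago"):
    """List the file paths touched by commits since `since`.

    A file appears once for every commit that changed it.
    """
    result = subprocess.run(
        ["git", "log", f"--since={since}", "--name-only", "--pretty=format:"],
        cwd=repo_dir,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.splitlines()
```

Calling `count_hotspots(changed_paths("edx-platform")).most_common(5)` on a local clone would then list the five most frequently changed top-level entries.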

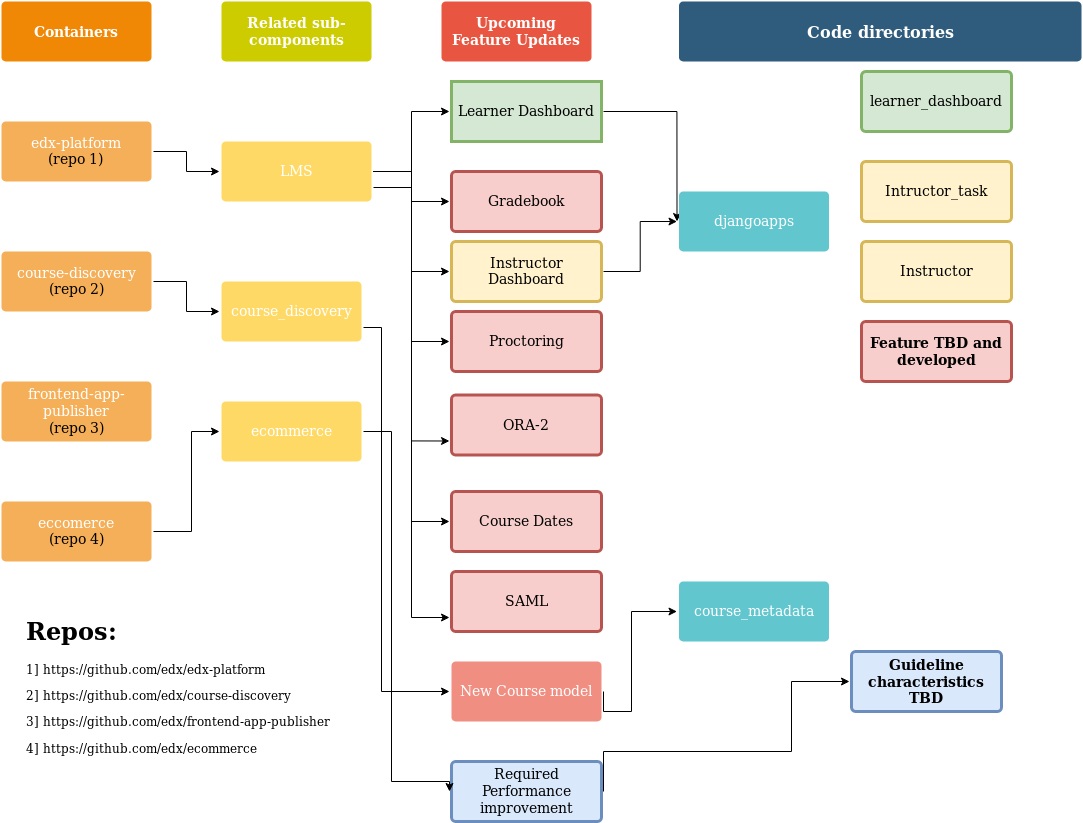

The accompanying figure maps the roadmap onto the system’s components and shows some of the upcoming features that are being developed 3 4 5 6.

SIG assessment

The quality and maintainability of the code were assessed with the SIG platform 7, which rates a set of system properties: volume, duplication, unit complexity, unit size, unit interfacing, module coupling, component balance and component independence.

Open edX scored poorly on duplication (1/5), meaning that identical fragments of source code can be found in more than one place in the product. As a result, units were also considerably large, and unit size scored only 1.6/5. The overall volume of the project was not ideal either, at 3.2/5. This negatively impacts the project’s analyzability, since diagnosing faults or finding the parts to be modified is more difficult and time-consuming. Testability suffers as well, since a larger project needs more tests to be created and maintained, which increases the overall effort.

The component with the lowest scores in these categories was the Learning Management System (LMS). As the roadmap shows, the architecture team plans to make many changes in this specific module. Hopefully, these changes will reduce the liabilities.

Another category where Open edX failed to score high was component entanglement, which indicates the percentage of communication between top-level components that is part of commonly recognized architecture anti-patterns. Open edX scores only 1/5 here, mainly because of the common_lib component. Currently there are no planned feature updates for this component. In all the other categories Open edX is above average, the highlight being a 5/5 score for both module coupling and component independence.
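To make the duplication property concrete, the following sketch looks for blocks of identical consecutive lines that occur in more than one place. It is a simplified illustration, not SIG's actual algorithm; the function name `find_duplicates` and the default window of six lines are assumptions chosen for the example.

```python
from collections import defaultdict


def find_duplicates(files, window=6):
    """Map each repeated block of `window` lines to the places it occurs.

    `files` maps a file name to its source text. Blank lines and
    leading/trailing whitespace are ignored when comparing lines.
    Each location is a (file name, starting line number) pair.
    """
    seen = defaultdict(list)
    for name, text in files.items():
        lines = [
            (number, line.strip())
            for number, line in enumerate(text.splitlines(), start=1)
            if line.strip()
        ]
        # Slide a window over the non-blank lines of the file.
        for start in range(len(lines) - window + 1):
            block = tuple(line for _, line in lines[start:start + window])
            seen[block].append((name, lines[start][0]))
    return {block: places for block, places in seen.items() if len(places) > 1}
```

Every key in the result is a candidate for extraction into a shared function, which is the kind of refactoring that would raise a duplication score.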

SIG recommendations

The SIG platform offers refactoring suggestions that can improve a project’s score in the aforementioned categories. Using this feature, we obtained the following results:

- Duplication: SIG shows every place where code is written more than once. Refactoring candidates are sorted by impact (lines of duplicated code, number of occurrences). Since our project scored low on this metric, several units are labeled high-risk.

- Unit Size: For each unit, SIG presents its lines of code and its risk category for unit size, showing the user which units should be shortened. Since our project scored low on this metric, several units are considered high-risk.

- Unit Complexity: The user is shown the units with the highest McCabe index. Our project scored low on this metric, so several units are labeled high-risk.

- Unit Interfacing: The number of parameters per unit is the critical factor for this metric; again, SIG can be used to locate the units that need improvement.

- Module Coupling: This metric is based on fan-in, the number of incoming dependencies of a module. In our system only a couple of modules are labeled high-risk.

- Component Independence: This metric is the one where Open edX achieved the highest score and there are only 16 modules that need to be “isolated”.

- Component Entanglement: SIG suggests that communication lines between specific components should be clearly defined and limited.

General coding standards

The coding standards laid out by Open edX go well beyond merely writing clean code. They are derived from the requirements of potential users and are meant to make sure those requirements are met. For instance, many Open edX users have some form of disability or impairment and need third-party assistive software to interact with the website. For this software to function correctly, certain requirements have to be reflected directly in the code. These requirements can be found in the developer guide 8. Further coding standards cover support for right-to-left languages and the use of events and the event API in order to track analytics. During the pull request process, a core committer reviews the code to ensure it is up to par. If the code is unsatisfactory, the developer is contacted. Developers can also get guidance on writing correct code through the discussion boards.
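As an illustration of a requirement that has to be reflected directly in the code, consider text alternatives for images, which screen readers rely on. The check below is a hypothetical sketch using Python's standard `html.parser`; whether the Open edX guidelines phrase this rule in exactly this way is an assumption, and the class and function names are our own.

```python
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Collect the `src` of every <img> tag that has no `alt` attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src"))


def images_without_alt(html):
    """Return the sources of all images that lack a text alternative."""
    checker = MissingAltChecker()
    checker.feed(html)
    checker.close()
    return checker.missing
```

A check like this could run as part of the automated tests, so that a template with an unlabeled image is caught before a reviewer has to point it out.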

Technical debt

Finally, we attempted to assess the technical debt 9 in Open edX. Technical debt has multiple causes, and Open edX has teams devoted to identifying and resolving these issues. One cause is features that live in a single repo but are intended to be used by several independent components. An example is djangoapps/plugins, which Open edX wants to refactor into its own repo. Another source of technical debt is dead code, which clutters the software and slows down modifications. A further factor in Open edX is various sources of drag, such as an updated feature causing problems in other features that then need to be sorted out. One of the goals of our team for this project was to help reduce the technical debt of Open edX. To this end, one of our contributions will be the removal of deprecated schema models, more specifically the schemas related to the student database. This will improve overall code quality by reducing the underlying technical debt of the Open edX platform.

1. https://edx.readthedocs.io/projects/edx-developer-guide/en/latest/

2. https://edx.readthedocs.io/projects/edx-developer-guide/en/latest/_images/pr-process.png

3. https://github.com/edx/edx-platform

4. https://github.com/edx/course-discovery

5. https://github.com/edx/frontend-app-publisher

6. https://github.com/edx/ecommerce

7. https://sigrid-says.com/softwaremonitor/tudelft-edxplatform/docs/Sigrid_User_Manual_20191224.pdf

8. https://edx.readthedocs.io/projects/edx-developer-guide/en/latest/conventions/index.html

9. https://openedx.atlassian.net/wiki/spaces/AC/pages/706183193/Architecture%2BDebt